Difficult post today, friends, and subtle. But a necessary one for the folks who still use p-values.

Some two and a half years ago I posted this article: “An Infinity of Null Hypotheses — Another Anti-P-Value Argument”. The title is unfortunate; or, rather, the subtitle is. It should have read “The Anti-P-Value Argument.”

It is “the” and not “another” because no other argument is needed.

Here it is in brief:

The P-value is used only sometimes, and arbitrarily, for deciding between probability models, whereas other times decisions are made using logical probability. But logical probability and P-value theory are incompatible: to be consistent, P-values should be used for every decision, but this is impossible; therefore, P-values should never be used.

Unless a probability model can be deduced from definite premises, it must itself be uncertain; and if it is itself uncertain, the model should be decided by P-value, which never happens, and is indeed impossible, since the number of possible models is infinite.

Therefore, some other mechanism must be used to choose uncertain models. It cannot be P-values, but it can be probability.

By models deduced from definite premises, I mean models deduced from premises like these: There are n_1 white balls, n_2 yellow balls, and n_3 blue balls in this bag, and only one will be drawn out. We deduce the probability model: the chance a white ball is drawn is n_1/n, where n is the sum of n_1, n_2, and n_3.
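The deduction from the bag premises can be sketched in a few lines of Python. This is only an illustration: the function name `draw_probabilities` and the counts 3, 5, and 2 are assumptions standing in for n_1, n_2, and n_3, not anything given in the premises.

```python
from fractions import Fraction


def draw_probabilities(counts):
    """Deduce the chance of each colour on a single draw from the stated counts."""
    n = sum(counts.values())  # n = n_1 + n_2 + n_3
    return {colour: Fraction(k, n) for colour, k in counts.items()}


# Hypothetical counts standing in for n_1, n_2, n_3.
bag = {"white": 3, "yellow": 5, "blue": 2}
probs = draw_probabilities(bag)

print(probs["white"])  # 3/10
```

Nothing is estimated or tested here. The probabilities follow from the premises alone, which is why nobody disputes the choice of model.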

There are no real disputes about choosing models of this kind, even if not everybody agrees on what the probabilities deduced in the model mean. By choosing I mean people do not really sit down and seriously consider picking between the model we deduced and, say, some parameterized multinomial or large-sample normal approximation or whatever.

But there are disputes galore about models that cannot be deduced. Consider a model of weight loss between males and females who are made to adhere to some diet.

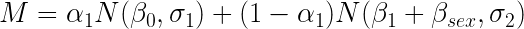

How shall that be modeled? We cannot deduce (a strict word!) a model, but it’s simple to propose one, such as a normal distribution model of the weight loss, with one parameter indicating how the normal’s central parameter differs by sex.

But that is only one possible model. A gamma distribution model can be used instead. Or a T-distribution model, or on and on and on, and so forever.

There is a veritable endless sea of potential models. And it doesn’t make one whit of difference whether any one of them fits better than another, or one makes superior predictions according to some utility function, or whatever.

The point is rather that all these models, except for one, or a small handful, have been rejected by a mechanism that is not a P-value. Indeed, they have been rejected using logical probability.

To select a single model, or perhaps some subset, in a way consistent with frequentist theory, we need to test a series of “null hypotheses”, each stating that the parameter which selects a given model (or sets the chance of it to zero) takes a certain value. And we need to do this for an infinite number of models.

We must do this because the model itself is uncertain. It is not deduced. It has uncertainty.

Yes, we can, and people do, all the time, plop down any old model, justified by custom or symmetry, or other considerations that are far removed from deduction, but that only means they do not take P-values seriously. That they only invoke them when they help their case.

For instance, the P-value is invoked inside the normal model mentioned above, on the parameter indicating sex. Which really means the P-value is used on just two models. In somewhat crude notation, we can write our ad hoc model M:

where each αi can only take the values 0 or 1. The “null” is that α1 = 1, and by fiat all the other models are disallowed. Usually, of course, the “null” is written that βsex=0 in only the second model. But that notation is tricky and misleading, because, as I hope is now clear, there are two models, and not just one.
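As a hedged sketch only, one way to write a two-model choice of this kind is shown below. The exact form, the constraint that the αi sum to 1, and the shared symbols β0 and σ are assumptions made for illustration, not necessarily the notation intended above.

$$
M = \alpha_1\,\mathrm{N}(\beta_0,\ \sigma) \;+\; \alpha_2\,\mathrm{N}(\beta_0 + \beta_{\text{sex}}\cdot\text{sex},\ \sigma),
\qquad \alpha_i \in \{0, 1\},\quad \alpha_1 + \alpha_2 = 1 .
$$

Read this way, α1 = 1 picks the model with no sex term, α2 = 1 picks the model with one, and every other candidate (gamma, t, and so on) has silently been given α = 0 without any test.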

(Skip this paragraph if you have to.) The “β0” and “β1” (and σs) are not the same, though they are usually taken to be in the way these models are written. I differ because I say any change in a model gives a different model. And consider that the values chosen (“estimated”) for β0 and β1 will not be the same from one model to the other.

I hope now the context of the original post makes more sense. For that equation we just wrote can, and must, be expanded for an infinite number of other parameters that are left out without the benefit of hypothesis testing.

Notice that model choice is no problem at all in logical probability. Because probabilities are only formed on premises assumed. If we cannot deduce a model, we can with perfect consistency say “I am entertaining this limited class of models because of experience”, which sets a probability of zero on all unused models.
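A minimal sketch of that idea in Python, under loud assumptions: the weight-loss figures are invented, the two candidate models have fixed parameters chosen only for illustration, and `model_probabilities` is a hypothetical helper, not a method from this post. The premise “I am entertaining only these models” is what puts probability zero on everything not listed.

```python
import math
from statistics import NormalDist


def model_probabilities(data, models, prior=None):
    """Probability of each entertained model, given the data and the premises.

    Any model not listed in `models` has probability zero by assumption.
    Listed models need a strictly positive prior.
    """
    names = list(models)
    if prior is None:
        # Assumed premise: no listed model is favoured before seeing the data.
        prior = {name: 1 / len(names) for name in names}
    # Log prior plus log-likelihood of the data under each fully specified model.
    log_post = {
        name: math.log(prior[name])
        + sum(math.log(models[name].pdf(x)) for x in data)
        for name in names
    }
    top = max(log_post.values())  # subtract the max for numerical stability
    weights = {name: math.exp(lp - top) for name, lp in log_post.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


# Invented weight-loss figures (kg), for illustration only.
losses = [2.1, 3.4, 1.8, 2.9, 4.0, 2.5]

# The limited class of models entertained "because of experience" (assumed).
entertained = {
    "normal(2, 1)": NormalDist(mu=2.0, sigma=1.0),
    "normal(3, 1)": NormalDist(mu=3.0, sigma=1.0),
}

probs = model_probabilities(losses, entertained)
for name, p in probs.items():
    print(f"{name}: {p:.3f}")
```

The numbers that come out are local to the premises: change the list of entertained models, or the prior, and the probabilities change with them. No hypothesis test was needed to leave the gamma or t models out.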

Since most models are not deduced, these probability judgments are local and not universal truths. And that is how we go about in daily life, forming (usually unquantified) probabilities, making judgments and decisions.

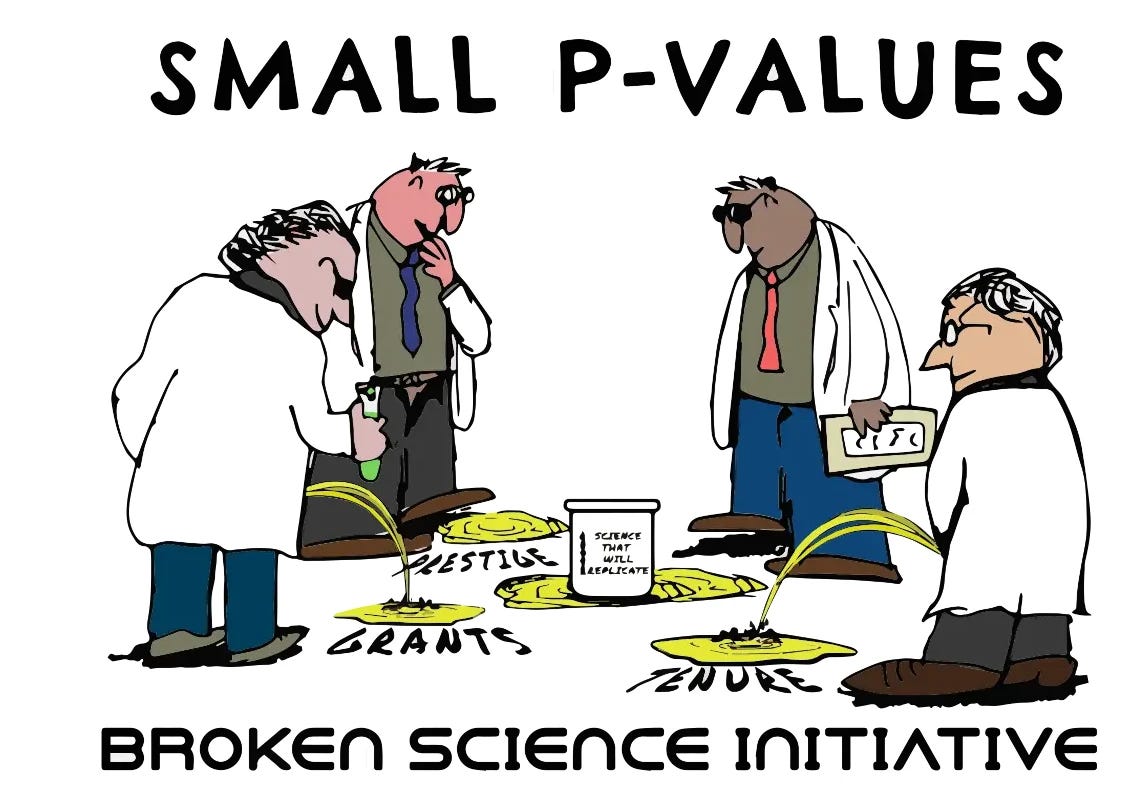

P-values are silly. Give them up.

Buy my new book and learn to argue against the regime: Everything You Believe Is Wrong.

You write like a Medieval philosopher.

I love it!

People don't dare tread anywhere near this style of reasoning.

Witty and useful! You and I are working on the same project (enabling people to make informed decisions).

Reminds me of my experience with "science" (yes, I am a "scientist"):

https://rayhorvaththesource.substack.com/p/freaks-of-science

And, of course, my recent article is also relevant:

https://rayhorvaththesource.substack.com/p/you-are-constantly-being-misled