How Smoothing Time Series Generates Massive Over-Certainty

Day four of the week of classic posts on global warming, now “climate change”, a subject which I had hoped had faded into obscurity, but, alas, has not. Your author has many bona fides and much experience in this field: see this.

Announcement. I am on vacation this week preparing for the Cultural Event of the Year. This post originally ran 4 July 2009. It exposes a common blunder wherever time series are used: replacing Reality with a model, and then acting as if the model is Real. Where have we heard this before? The post’s original title was “Do NOT smooth time series before computing forecast skill”.

Somebody at Steve McIntyre’s Climate Audit kindly linked to an old article of mine entitled “Do not smooth series, you hockey puck!”, a warning that Don Rickles might give.

Smoothing creates artificially high correlations between any two smoothed series. Take two randomly generated sets of numbers, pretend they are time series, and then calculate the correlation between the two. It should be close to 0 because, obviously, there is no relation between the two sets. After all, we made them up.

But start smoothing those series and then calculate the correlation between the two smoothed series. You will find that the correlation between the two smoothed series is typically much larger in magnitude than between the non-smoothed series. Further, the more smoothing, the larger the correlation tends to be.
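Anybody can try this at home. Here is a minimal sketch, assuming NumPy and using a plain moving average as the smoother (my choice for brevity; any smoother will do). Because any single pair of series can go either way, it averages the absolute correlation over many independent pairs of pure noise; the names `moving_average` and `mean_abs_corr` are made up for illustration.

```python
import numpy as np

def moving_average(x, window):
    """Equal-weight moving average; 'valid' mode returns len(x) - window + 1 values."""
    kernel = np.ones(window) / window
    return np.convolve(x, kernel, mode="valid")

def mean_abs_corr(window, n=200, reps=500, seed=1):
    """Average |correlation| between pairs of unrelated white-noise series after smoothing."""
    rng = np.random.default_rng(seed)
    total = 0.0
    for _ in range(reps):
        a = rng.normal(size=n)  # two series with no relation whatsoever
        b = rng.normal(size=n)
        if window > 1:
            a, b = moving_average(a, window), moving_average(b, window)
        total += abs(np.corrcoef(a, b)[0, 1])
    return total / reps

for w in (1, 5, 25, 75):  # window 1 means no smoothing
    print(w, round(mean_abs_corr(w), 3))
```

The printed averages climb steadily as the window widens, even though every pair of series was generated independently.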

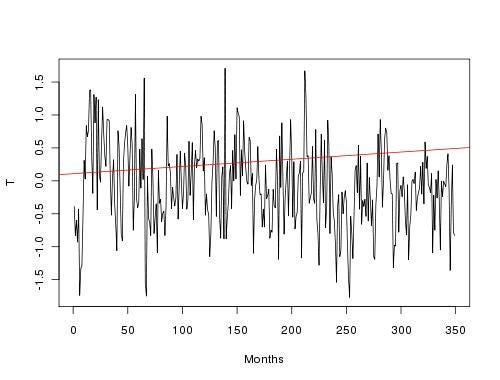

The same warning applies to series that will be used for forecast verification, as in the picture below (which happens to be the RSS satellite temperature data for the Antarctic, but the following works for any set of data).

The actual, observed, real data is the jagged line. The red line is an imagined forecast. Looks poor, no? The R^2 correlation between the forecast and observation is 0.03, and the mean squared error (MSE) is 51.4.
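For reference, the two verification statistics take only a few lines. This sketch assumes NumPy and takes “R^2” to mean the squared Pearson correlation between forecast and observations, which is how it is used here; the function names are illustrative.

```python
import numpy as np

def r_squared(forecast, observed):
    """Squared Pearson correlation between forecast and observations."""
    r = np.corrcoef(forecast, observed)[0, 1]
    return r * r

def mse(forecast, observed):
    """Mean squared error: the average squared difference between forecast and observation."""
    diff = np.asarray(forecast, dtype=float) - np.asarray(observed, dtype=float)
    return float(np.mean(diff ** 2))

# Tiny example: a forecast that is an exact linear function of the observations
obs = np.array([1.0, 2.0, 3.0, 4.0])
fc = 2.0 * obs + 1.0
print(r_squared(fc, obs))  # approximately 1.0
print(mse(fc, obs))        # 13.5
```

The toy example shows why the two measures can disagree: a forecast of twice the observations plus one earns a perfect R^2 yet a large MSE, because correlation ignores systematic offsets and scale.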

That jagged line hurts the eyes, doesn’t it? I mean, that can’t be the “real” temperature, can it? The “true” temperature must be hidden in the jagged line somewhere. How can we “find” it? By smoothing the jagginess away, of course! Smoothing will remove the peaks and valleys and leave us with a pleasing, soft line, which is not so upsetting to the aesthetic sense.

All nonsense. The real temperature is the real temperature is the real temperature, so to smooth is to create something that is not the real temperature but a departure from the real temperature. There is no earthly reason to smooth actual observations. But let’s suppose we do and then look at the correlation and the MSE verification statistics and see what happens.

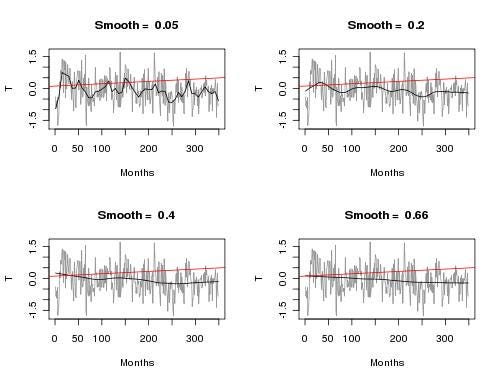

We’ll use a loess smoother (it’s easy to implement), which takes a “span” parameter: larger values of the span mean more smoothing. The following picture demonstrates four different spans of increasing smoothness. You can see that the black, smoothed line becomes easier on the eye, and gets “closer” in style and shape to the red forecast line.
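The smoother’s code isn’t given here, so what follows is only a simplified stand-in, assuming NumPy: a local-linear loess with tricube weights and no robustness iterations. `span` is the fraction of points in each local neighbourhood; values above 1 stretch every neighbourhood past the ends of the series, loosely following the convention of R’s `loess` (which defaults to `span = 0.75` but fits local quadratics, `degree = 2`). The data are made up, not the RSS series, and `roughness` is a hypothetical helper measuring jagginess.

```python
import numpy as np

def loess(x, y, span=0.75):
    """Local-linear loess with tricube weights (simplified sketch, no robustness steps).

    span <= 1: each local fit uses roughly the nearest ceil(span * n) points.
    span > 1:  every point is used and the neighbourhood radius is span times the
               range of x, so very large spans approach one least-squares line.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = x.size
    q = min(n, max(3, int(np.ceil(span * n))))  # neighbours per local fit
    fitted = np.empty(n)
    for i, x0 in enumerate(x):
        dist = np.abs(x - x0)
        if span > 1:
            radius = span * (x.max() - x.min())
        else:
            radius = np.sort(dist)[q - 1]
        u = np.clip(dist / radius, 0.0, 1.0)
        w = (1.0 - u ** 3) ** 3  # tricube weights; zero at and beyond the radius
        sw = np.sqrt(w)
        # Weighted least squares for a line centred at x0: the intercept is the fit at x0
        design = np.column_stack([np.ones(n), x - x0])
        beta, *_ = np.linalg.lstsq(design * sw[:, None], y * sw, rcond=None)
        fitted[i] = beta[0]
    return fitted

def roughness(series):
    """Sum of squared second differences: large for jagged lines, near 0 for straight ones."""
    return float(np.sum(np.diff(series, n=2) ** 2))

rng = np.random.default_rng(0)
t = np.arange(120, dtype=float)
obs = 0.04 * t + rng.normal(scale=6.0, size=t.size)  # made-up jagged series
print("raw", round(roughness(obs), 1))
for span in (0.1, 0.3, 0.75, 50.0):
    print(span, round(roughness(loess(t, obs, span)), 4))
```

Roughness collapses as the span grows; at very large spans the “smoothed observations” are, for all practical purposes, a straight line.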

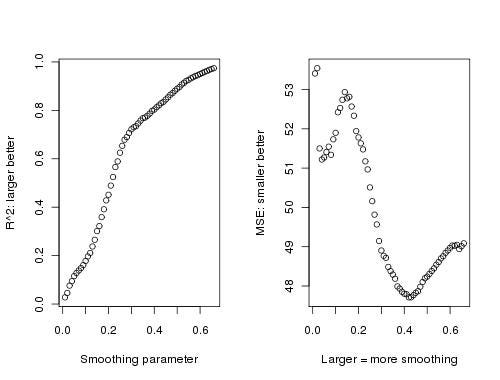

How about the verification statistics? That’s in the next picture: on the left is the R^2 correlation, and on the right is the MSE verification measure. Each is shown as the span, or smoothing, increases.

R^2 grows from near 0 to almost 1! If you were trying to be clever and wanted to say your forecast was “highly correlated” with the observations, you need only say “We statistically smoothed the observations using the default loess smoothing parameter and found the correlation between the observations and our forecast was nearly perfect!” Of course, what you should have said is that the correlation between your smoothed series and your forecast is high. But so what? The trivial difference in wording is harmless, right? No: smoothing inflates the correlation, even for obviously poor forecasts such as our example.
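Readers without the RSS data can reproduce the pattern with invented numbers. A sketch, assuming NumPy: the “observations” are a weak trend plus noise, the forecast is a hypothetical and deliberately poor straight line, and the smoother is a simplified local-linear loess (tricube weights, no robustness steps; spans above 1 stretch every neighbourhood past the ends of the series). None of this is the code behind the figures.

```python
import numpy as np

def loess(x, y, span=0.75):
    """Simplified local-linear loess with tricube weights; span > 1 widens every neighbourhood."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = x.size
    q = min(n, max(3, int(np.ceil(span * n))))  # neighbours per local fit when span <= 1
    fitted = np.empty(n)
    for i, x0 in enumerate(x):
        dist = np.abs(x - x0)
        radius = span * (x.max() - x.min()) if span > 1 else np.sort(dist)[q - 1]
        w = (1.0 - np.clip(dist / radius, 0.0, 1.0) ** 3) ** 3  # tricube weights
        sw = np.sqrt(w)
        design = np.column_stack([np.ones(n), x - x0])  # local line centred at x0
        beta, *_ = np.linalg.lstsq(design * sw[:, None], y * sw, rcond=None)
        fitted[i] = beta[0]
    return fitted

def r_squared(a, b):
    """Squared Pearson correlation."""
    return np.corrcoef(a, b)[0, 1] ** 2

def mse(a, b):
    """Mean squared error."""
    return float(np.mean((np.asarray(a) - np.asarray(b)) ** 2))

rng = np.random.default_rng(42)
t = np.arange(200, dtype=float)
obs = 0.04 * t + rng.normal(scale=6.0, size=t.size)  # synthetic jagged "observations"
forecast = 5.0 + 0.01 * t                             # hypothetical poor straight-line forecast

print("raw", round(r_squared(forecast, obs), 3), round(mse(forecast, obs), 2))
for span in (0.1, 0.3, 0.75, 2.0, 50.0):
    smoothed = loess(t, obs, span)
    print(span, round(r_squared(forecast, smoothed), 3), round(mse(forecast, smoothed), 2))
```

The forecast never changes; only the observations are massaged, yet R^2 heads toward 1 as the span grows.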

The effect on MSE is more complicated, because that measure is more sensitive to the wiggles in the observation/smoothed series. But in general, MSE “improves” as smoothing increases. Again, if you want to be clever, you can pick a smoothing method that gives you the minimum MSE. After all, who would question you? You’re only applying standard statistical methods that everybody else uses. If everybody else is using them, they can’t be wrong, right? Wrong.
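Here is what that “clever” search looks like in practice: try many amounts of smoothing and report whichever gives the smallest MSE. A sketch, assuming NumPy; to stay short it swaps in a centred moving average for loess, and the data, forecast, and helper names are all made up for illustration.

```python
import numpy as np

def centred_moving_average(y, window):
    """Centred moving average of odd width; the ends average whatever neighbours exist."""
    half = window // 2
    out = np.empty(len(y))
    for i in range(len(y)):
        lo, hi = max(0, i - half), min(len(y), i + half + 1)
        out[i] = y[lo:hi].mean()
    return out

def mse(a, b):
    """Mean squared error."""
    return float(np.mean((np.asarray(a) - np.asarray(b)) ** 2))

rng = np.random.default_rng(7)
t = np.arange(200, dtype=float)
obs = 0.04 * t + rng.normal(scale=6.0, size=t.size)  # synthetic observations
forecast = 5.0 + 0.01 * t                             # hypothetical poor straight-line forecast

windows = range(1, 102, 2)  # window 1 means no smoothing at all
scores = {w: mse(forecast, centred_moving_average(obs, w)) for w in windows}
best = min(scores, key=scores.get)  # the window an over-eager analyst would report
print("raw MSE:", round(scores[1], 2), "best window:", best, "MSE:", round(scores[best], 2))
```

The forecast is identical in every case; the “improvement” comes entirely from altering the observations it is judged against.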

Our moral: always listen to Don Rickles. And Happy Fourth of July!

Update: July 5th

The results here do not depend strongly on the verification measure used. I picked MSE and R^2 only because of their popularity and familiarity. Anything will work. I invite anybody to give it a try.

The correlation between any two straight lines (of non-zero slope) is 1 or -1, so their R^2 is 1. The more smoothing you apply to a series, the closer to a straight line it gets. The “forecast” I made was already a straight line. The observations become closer and closer to a straight line the more smoothing there is. Thus, the more smoothing, the higher the correlation.
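A quick check of the straight-line fact, assuming NumPy; the two lines are arbitrary examples. Since each line is a linear function of the same time index, each is a linear function of the other, and the correlation is +1 or -1 depending only on whether the slopes share a sign.

```python
import numpy as np

t = np.arange(50, dtype=float)
line_a = 2.0 + 0.3 * t   # any straight line with non-zero slope
line_b = 10.0 - 4.0 * t  # another, sloping the other way

r = np.corrcoef(line_a, line_b)[0, 1]
print(r, r ** 2)  # approximately -1.0 and 1.0
```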

Also, arguments about frequency spectra, or about measuring signals in noise, miss the point. Techniques to deal with those subjects can be valuable, but the uncertainty inherent in them must be carried through to the eventual verification measure, something which is almost never done. We’ll talk about this more later.

Subscribe or donate by credit card to support this site and its wholly independent host. Or use the paid subscription at Substack. Cash App: $WilliamMBriggs. For Zelle, use my email: matt@wmbriggs.com, and please include yours so I know who to thank.